- 22 April, 2024

- By Dave Cassel

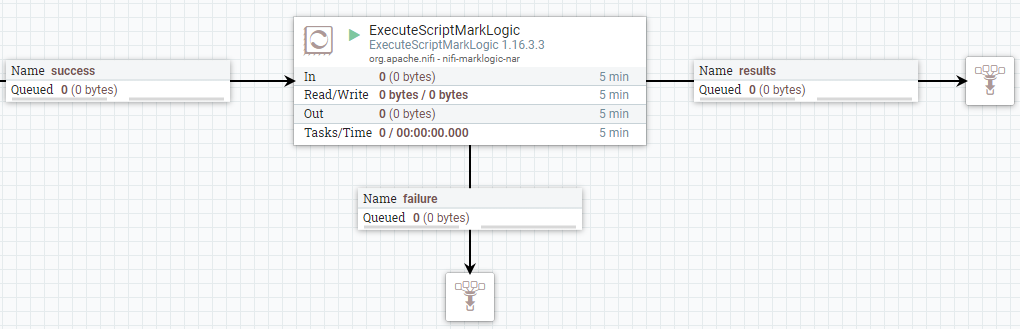

In one of my recent posts, I talked about ExecuteScriptMarkLogic, a handy processor for getting Apache NiFi to talk to Progress MarkLogic. I’d like to share a couple of small “gotchas” that we’ve run into. I’m using ExecuteScriptMarkLogic to illustrate them, but they apply to any processor where we use JavaScript code in the […]